You know how it goes. The cobbler's children have no shoes. The doctor neglects his own health. The hairdresser with the messy hair.

For a while, I was exactly that cobbler.

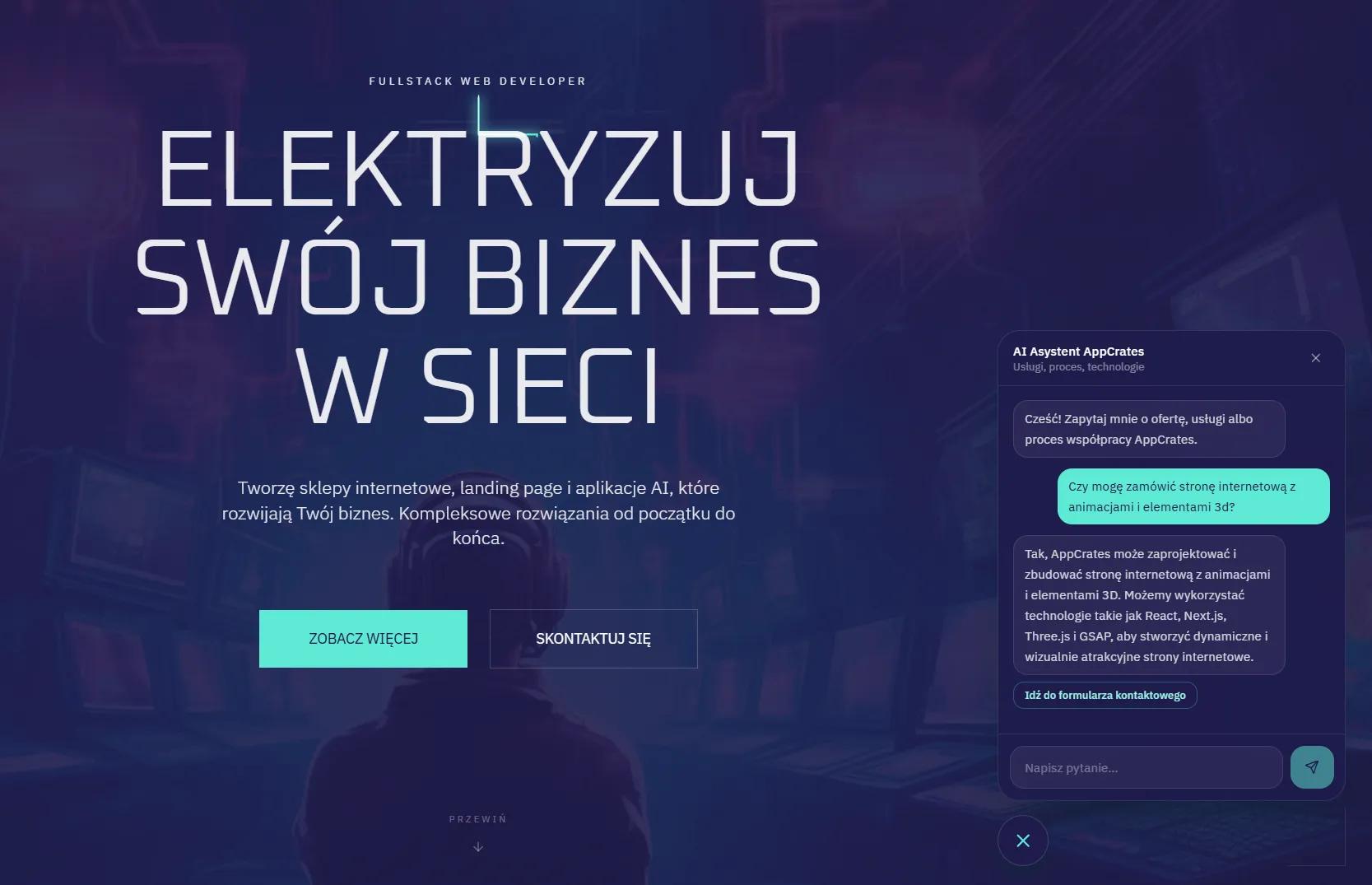

AppCrates offers AI implementations, RAG, chatbots, assistants built on company data. I build it, explain how it works, ship it for clients. But on my own landing page? Nothing. Zero. A contact form and an FAQ.

Classic.

I decided to fix that — and show you exactly what it looks like from the inside.

Why I Did It At All

It's not just about appearances, though that's an argument too. It's about the fact that if you don't use what you sell, that's a red flag. For a potential client who lands on your site and wants to ask something at 11PM — there's nobody there. The form sits waiting. Nobody reads emails at that hour.

An AI assistant solves exactly that problem. It answers questions about services, the collaboration process, technologies — based on real data from the site, without confabulation, without hallucinations.

And yes, I know — "without hallucinations" is a bold claim. I'll explain how I achieved that in a moment.

Architecture — RAG in a Nutshell

The catch with building LLM-based chatbots is that the model knows nothing about your company by default. It knows the world up to its training cutoff, but it doesn't know your services, your projects, or your pricing.

That's where RAG comes in — Retrieval Augmented Generation. In short: before the model responds, we first pull the relevant context from a database and inject it into the query. The model doesn't guess — it answers based on real data.

In my case, the context is built from:

- Content from Sanity CMS (services, projects, blog posts, the "about me" section)

- FAQ

- Privacy policy

- Company description

All of this lands in the system prompt as structured context. The model gets concrete facts and answers based on them. No making things up.

Security — No Cutting Corners Here

This is the part clients often don't see, but which is absolutely CRITICAL in any chatbot implementation that has access to data.

The problem is simple: if a chatbot has access to a CMS and someone types "delete all posts" or "change the pricing" — what happens?

With bad architecture: exactly what you asked for.

That's why I built 8 layers of protection, from the client side all the way down to the Sanity client architecture itself.

Read-Only Client

The Sanity client used by the chatbot has no write token. Literally — it cannot modify anything, even if it receives such an instruction. This is the foundation. Everything else is additional safety netting.

Middleware and HTTP Guards

The /api/chat endpoint accepts only POST and OPTIONS methods. GET, PUT, PATCH, DELETE — rejected at the middleware level, before they ever reach the application logic. Additional guards exist at the route handler level too — defense in depth, as they say.

Destructive Request Detection

A keyword list — in both Polish and English — that immediately blocks a request before it ever reaches the model. "Delete", "remove", "edit", "publish", "reset"... and their Polish equivalents. The user gets a refusal with an explanation. The model never even sees the request.

Prompt Engineering

The system prompt has clear rules baked in: don't perform mutating actions, answer only based on the provided context, don't agree to any attempts to bypass the rules. This is the last line of defense — if something slips through the previous layers, the model rejects the request on its own.

The result? A grep through the code won't find a single call to create(), patch(), delete(), or commit() in the chatbot route file. The architecture physically prevents writes.

Bilingual Support — PL and EN

The site handles two languages, so the chatbot had to as well. I implemented language detection at multiple levels.

On the client side — language from the application context. On the server side — fallback to content analysis: Polish diacritic characters, Polish stop words. Defaults to Polish if uncertain.

The entire RAG context is built separately for each language — separate section headers, separate descriptions, separate FAQ content. The cache operates per-language, so we don't pay double in Sanity queries.

The widget UI is fully localized too — every system message, placeholder, aria-label, title. Zero hardcoded Polish text in the code.

Rate Limiting — So Nobody Abuses It

10 requests per minute per IP on the server side. 15 requests per hour per browser on the client side. When the limit is exceeded — a localized error message, no crash, no 500.

A honeypot field in the form as additional bot protection.

How It Turned Out

The widget runs on the homepage, project detail pages, FAQ, and the privacy policy. On mobile it's full screen with 100dvh, on desktop a floating window. The open button hides on mobile when the chat is open — the X in the header takes over instead.

Build passed. TypeScript passed. It works.

But more important than the technical details is what a visitor can now do: ask about any service, about the collaboration process, about specific technologies — and get an answer instantly. No waiting for an email. No digging through FAQ.

What This Means for You

If you have a business and a website — an AI assistant built on your own data isn't a gimmick. It's a concrete sales tool that works 24/7, doesn't get sick, and never forgets what you offer.

The catch is that you do it once and do it right — with a well-thought-out security architecture, because a chatbot plugged into a CMS without proper safeguards is asking for trouble.

I went through all of this myself while building it for my own site and for the marketplace artovnia.com. I know what works, I know what can go wrong.

If you want a similar solution for your business — reach out. Happy to talk.